Table of Contents

- Why Use ChatGPT to Create a Dataset?

- Before You Start: Decide What Kind of Dataset You Need

- Step 1: Define the Dataset Objective

- Step 2: Design the Schema Before Generating Anything

- Step 3: Create a Small Set of Human-Written Seed Examples

- Step 4: Write a Prompt That Produces Structured, Varied Output

- Step 5: Generate in Small Batches, Not One Giant Avalanche

- Step 6: Review, Filter, and Deduplicate the Output

- Step 7: Add Edge Cases on Purpose

- Step 8: Balance the Dataset Without Making It Fake-Looking

- Step 9: Document the Dataset Like a Responsible Adult

- Step 10: Export the Dataset in the Right Format

- A Practical Example: Building a Sentiment Dataset with ChatGPT

- Common Mistakes to Avoid

- When ChatGPT Is a Great Fit, and When It Is Not

- Final Thoughts

- Field Notes: Real-World Experiences Using ChatGPT to Create Datasets

- Conclusion

If you have ever stared at a blank spreadsheet and thought, “I should build a dataset,” then immediately made coffee, checked email, and reconsidered every life choice that led you here, good news: ChatGPT can help. Quite a lot, actually.

Used well, ChatGPT can speed up dataset planning, schema design, labeling instructions, synthetic example generation, data cleaning, category balancing, and documentation. Used badly, it can produce a sparkling mountain of nonsense wrapped in perfect grammar. That is the deal. The trick is not to let the model become your dataset boss. It should be your fast, tireless assistant.

In this step-by-step guide, you will learn how to use ChatGPT to create a dataset that is useful, structured, and less likely to become a haunted CSV. We will cover when AI-generated data makes sense, how to design a schema, how to prompt for high-quality rows, how to review for bias and duplication, and how to package the final result for analysis, model evaluation, or fine-tuning.

Why Use ChatGPT to Create a Dataset?

Creating a dataset manually is slow, repetitive, and often expensive. ChatGPT can reduce that pain by helping you generate rows in a consistent format, rewrite messy text into structured fields, create edge cases, expand underrepresented classes, and draft documentation. It is especially helpful when you need:

- Training or evaluation examples for a narrow use case

- Synthetic data for prototyping

- Labeled examples for classification tasks

- Structured JSON or CSV rows from unstructured text

- Test cases that include ordinary, tricky, and edge-case inputs

That said, ChatGPT is not a magic data vending machine. It does not automatically know your ground truth. It can inherit bias, repeat patterns too neatly, invent facts, and overproduce “average-looking” examples while missing the weird stuff real users do at 2:13 a.m. So the best approach is simple: use ChatGPT for speed, then use human review for quality.

Before You Start: Decide What Kind of Dataset You Need

The biggest dataset mistake is starting with generation before defining purpose. That is like buying 400 file folders before deciding whether you run a law firm, a bakery, or a squirrel detective agency.

Ask These Four Questions First

- What is the dataset for? Training, evaluation, analytics, fine-tuning, search, or internal testing?

- What does one row represent? A customer message, product listing, support ticket, document chunk, or question-answer pair?

- What fields do you need? Text, label, intent, sentiment, language, source, timestamp, or difficulty level?

- What quality rules must the dataset follow? No duplicates, no private data, no toxic content, balanced class distribution, valid JSON in every row, and so on.

If you skip this step, ChatGPT will still generate something. It just may not be something you can actually use.

Step 1: Define the Dataset Objective

Start with one tight sentence. Not a novel. Not a vibes-based mission statement. One sentence.

Example objective: “Create a dataset of 1,000 customer support messages labeled by intent for an e-commerce chatbot.”

That objective already tells ChatGPT several important things:

- The domain: e-commerce

- The row type: customer support message

- The target output: labeled intent

- The scale: 1,000 examples

You can make it even better by specifying the label set, language, tone, and edge cases.

Improved objective: “Create a dataset of 1,000 realistic U.S. English customer support messages for an online store, labeled with one of six intents: refund_request, order_tracking, address_change, damaged_item, payment_issue, or product_question.”

Step 2: Design the Schema Before Generating Anything

This is where smart dataset creators separate themselves from chaos merchants.

A schema is simply the structure of your data. If you want usable output, tell ChatGPT exactly what each row should contain.

Example JSON Schema for a Simple Dataset
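A minimal sketch of such a schema, written for the customer support objective from Step 1, might look like the following. It is expressed as a Python dictionary in JSON Schema style. The field names (`id`, `text`, `intent`, `difficulty`), the difficulty levels, and the `validate_row` helper are illustrative assumptions; only the six intent labels come from the objective above.

```python
# Hypothetical row schema in JSON Schema style. Field names are assumptions.
INTENTS = [
    "refund_request", "order_tracking", "address_change",
    "damaged_item", "payment_issue", "product_question",
]

ROW_SCHEMA = {
    "type": "object",
    "required": ["id", "text", "intent", "difficulty"],
    "additionalProperties": False,
    "properties": {
        "id": {"type": "integer"},
        "text": {"type": "string", "minLength": 1},
        "intent": {"type": "string", "enum": INTENTS},
        "difficulty": {"type": "string", "enum": ["easy", "medium", "hard"]},
    },
}

# Maps the schema's type names to Python types for this small checker.
_PY_TYPES = {"integer": int, "string": str}


def validate_row(row, schema=ROW_SCHEMA):
    """Return a list of problems; an empty list means the row passes."""
    problems = []
    props = schema["properties"]
    for name in schema["required"]:
        if name not in row:
            problems.append(f"missing field: {name}")
    for name, value in row.items():
        if name not in props:
            problems.append(f"unexpected field: {name}")
            continue
        rule = props[name]
        expected = _PY_TYPES[rule["type"]]
        # bool is a subclass of int in Python, so reject it explicitly.
        if not isinstance(value, expected) or isinstance(value, bool):
            problems.append(f"wrong type for {name}")
            continue
        if "enum" in rule and value not in rule["enum"]:
            problems.append(f"invalid value for {name}: {value!r}")
        if rule.get("minLength") and len(value.strip()) < rule["minLength"]:
            problems.append(f"empty value for {name}")
    return problems
```

The checker covers only the handful of rules used here. For anything larger, a dedicated JSON Schema validation library is probably the better choice.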

Good schemas are boring in the best way. They are clear, constrained, and difficult to misread. Decide:

- Required fields

- Allowed labels or enums

- Field types such as string, integer, boolean, array

- Naming conventions

- Missing value rules

If you plan to use the dataset for fine-tuning later, structure matters even more. A messy dataset is not “creative.” It is just expensive in new ways.

Step 3: Create a Small Set of Human-Written Seed Examples

Do not ask ChatGPT to invent your entire dataset from thin air unless you enjoy surprise. Start with 10 to 30 seed examples written or reviewed by a human. These examples act like anchors. They show the model what “good” looks like.

Your seed examples should include:

- Typical rows

- Rare but important edge cases

- Short and long examples

- Examples that are easy to label

- Examples that are easy to confuse

This step matters because ChatGPT often mirrors the examples and instructions you provide. If your seed set is vague, repetitive, or sloppy, the generated dataset will salute and become vague, repetitive, and sloppy too.

Step 4: Write a Prompt That Produces Structured, Varied Output

Now the fun part. Prompting. Also known as the ancient art of telling a machine exactly what you want instead of saying “make it good” and hoping destiny helps.

A Strong Prompt Template
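One way to build such a prompt is sketched below as a small Python function, so the same template can be reused across batches. The wording of the prompt, the `build_prompt` name, and its parameters are assumptions for illustration, not a required format.

```python
import json


def build_prompt(n_rows, labels, seed_examples, banned_phrases=()):
    """Assemble a prompt with task, format, labels, quality rules, and examples."""
    label_list = ", ".join(labels)
    example_lines = "\n".join(
        json.dumps(example, ensure_ascii=False) for example in seed_examples
    )
    banned_lines = "\n".join(
        f'- Do not use the phrase "{phrase}".' for phrase in banned_phrases
    )
    return f"""You are generating rows for a customer support intent dataset.

TASK
Write {n_rows} realistic U.S. English customer support messages for an online store.

FORMAT
Return one JSON object per line with exactly these keys: "text", "intent".
Do not add commentary, numbering, or markdown.

LABELS
"intent" must be exactly one of: {label_list}.

QUALITY RULES
- Vary length, tone, and sentence openings.
- No real names, emails, phone numbers, or order numbers.
- No near-duplicates of the examples or of each other.
{banned_lines}

EXAMPLES
{example_lines}
"""
```

Calling `build_prompt(25, labels, seeds, banned_phrases=["I need help with my order"])` returns a string you can paste into ChatGPT or send through whatever client you use.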

This prompt works because it does five useful things at once:

- Defines the task

- Specifies the format

- Restricts the labels

- Sets quality rules

- Provides examples

If the output starts drifting, tighten the prompt. Add negative instructions such as “do not reuse sentence openings” or “do not produce generic filler like ‘I need help with my order.’”

Step 5: Generate in Small Batches, Not One Giant Avalanche

Yes, it is tempting to ask for 10,000 rows in one shot. No, that is usually not the best move.

Generate in batches of 25, 50, or 100 rows. Review each batch. Adjust your prompt. Then continue. This gives you control over quality, diversity, and cost.

Small batches help you catch problems early, such as:

- Label imbalance

- Repetitive wording

- Schema drift

- Made-up facts

- Overly clean, robotic language

Think of batch generation like cooking pancakes. The first one is a test pancake. It may be ugly. That is normal. Do not serve the first pancake to production.
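The batch loop can be sketched roughly as follows. This assumes the model is asked to return one JSON object per line, and that `generate_fn` is a hypothetical callable you supply: it takes the number of rows to request and returns the model's raw text reply. How it reaches ChatGPT is left to you.

```python
import json


def parse_jsonl(raw_text):
    """Parse one JSON object per line; collect lines that fail to parse."""
    rows, bad_lines = [], []
    for line in raw_text.splitlines():
        line = line.strip()
        if not line:
            continue
        try:
            obj = json.loads(line)
        except json.JSONDecodeError:
            bad_lines.append(line)
            continue
        if isinstance(obj, dict):
            rows.append(obj)
        else:
            bad_lines.append(line)
    return rows, bad_lines


def generate_in_batches(generate_fn, total_rows, batch_size=50,
                        max_calls=100, on_batch=None):
    """Collect total_rows rows by requesting at most batch_size per call.

    on_batch, if given, is called with (call_number, rows) after each batch,
    which is a natural place to pause and review before continuing.
    """
    collected, rejected = [], []
    calls = 0
    while len(collected) < total_rows and calls < max_calls:
        calls += 1
        want = min(batch_size, total_rows - len(collected))
        rows, bad_lines = parse_jsonl(generate_fn(want))
        rows = rows[:want]  # ignore extras if the model overproduces
        rejected.extend(bad_lines)
        collected.extend(rows)
        if on_batch is not None:
            on_batch(calls, rows)
    return collected, rejected
```

The `max_calls` cap is there so a model that keeps returning unparseable text cannot loop forever.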

Step 6: Review, Filter, and Deduplicate the Output

This is the step people skip when they are in a hurry, and it is also the step that determines whether your dataset is useful or merely enthusiastic.

What to Check

- Validity: Does every row follow the schema?

- Consistency: Are labels applied the same way throughout?

- Diversity: Do examples vary in wording, context, and difficulty?

- Duplication: Are rows near-identical with tiny cosmetic edits?

- Bias: Are certain groups, tones, or scenarios overrepresented?

- Privacy: Did any row accidentally include personal or sensitive details?

You can even use ChatGPT for part of this review. Ask it to flag duplicates, spot inconsistent labels, or identify examples that look too generic. Just remember: letting a model grade its own homework is helpful, but not sufficient. A human should still sample and audit the results.
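Some of these checks are easy to automate before a human looks at anything. The sketch below handles two of them with the Python standard library: near-duplicate removal and repeated sentence openings. It assumes each row is a dictionary with a `text` field; the 0.9 similarity threshold and the three-word opening window are arbitrary starting points, not recommended values.

```python
import re
from collections import Counter
from difflib import SequenceMatcher


def normalize(text):
    """Lowercase and collapse punctuation and whitespace for comparison."""
    return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()


def deduplicate(rows, threshold=0.9):
    """Keep the first row of any group whose normalized texts are near-identical.

    Comparison is pairwise, so cost grows quadratically with the row count.
    That is fine for review-sized batches but slow for very large sets.
    """
    kept, dropped, kept_norm = [], [], []
    for row in rows:
        norm = normalize(row["text"])
        is_duplicate = any(
            SequenceMatcher(None, norm, other).ratio() >= threshold
            for other in kept_norm
        )
        if is_duplicate:
            dropped.append(row)
        else:
            kept.append(row)
            kept_norm.append(norm)
    return kept, dropped


def repeated_openings(rows, n_words=3, min_count=3):
    """Return openings (first n_words words) shared by at least min_count rows."""
    openings = Counter(
        " ".join(normalize(row["text"]).split()[:n_words]) for row in rows
    )
    return {opening: n for opening, n in openings.items() if n >= min_count}
```

Anything these functions flag is a candidate for review, not an automatic verdict. Two messages can share wording and still deserve separate rows.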

Step 7: Add Edge Cases on Purpose

Most weak datasets have one thing in common: they are full of clean, obvious examples and short on the messy stuff. Real users misspell words, ramble, switch topics, write in fragments, and sometimes type like the keyboard has offended them personally.

So ask ChatGPT for edge cases explicitly.
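An edge-case request might be assembled like this. The list of edge-case types is drawn from the behaviors described above, with "all caps or heavy punctuation" added as one interpretation of angry typing. The function name, the prompt wording, and the `edge_case_type` output key are assumptions.

```python
# Edge-case types to request; adjust to match what your real users do.
EDGE_CASE_TYPES = [
    "misspellings and typos",
    "long, rambling messages",
    "messages that switch topics midway",
    "sentence fragments",
    "all caps or heavy punctuation",
]


def build_edge_case_prompt(n_rows, labels, edge_types=EDGE_CASE_TYPES):
    """Build a prompt that asks for deliberately messy but plausible rows."""
    label_list = ", ".join(labels)
    type_lines = "\n".join(f"- {edge_type}" for edge_type in edge_types)
    return (
        f"Write {n_rows} difficult customer support messages for an online store.\n"
        f"Label each with exactly one intent from: {label_list}.\n"
        "Spread the rows across these edge-case types:\n"
        f"{type_lines}\n"
        'Return one JSON object per line with the keys "text", "intent", '
        'and "edge_case_type".\n'
        "Keep every message plausible. Do not explain your choices."
    )
```

Recording the edge-case type on each row makes it possible to report evaluation results per type later.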

This makes your dataset stronger for evaluation and more realistic for deployment.

Step 8: Balance the Dataset Without Making It Fake-Looking

Balancing classes is useful, especially for training. But there is a catch: perfectly balanced data can become suspiciously tidy. Real-world data is often lopsided.

A practical approach is to create two versions:

- Training set: More balanced, so the model sees enough of each class

- Evaluation set: More realistic, so performance reflects real use

ChatGPT is great at augmenting weak classes. If you only have 40 “payment_issue” examples and 300 “order_tracking” examples, ask for more payment issues with varied language, channel, and difficulty. Just do not let the new class become a copy-paste parade wearing different hats.
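Before asking for more rows, it helps to know exactly how many each class is short. A small sketch, assuming rows are dictionaries with an `intent` field and that you pick the per-class target yourself:

```python
from collections import Counter


def rows_needed_per_label(rows, labels, target_per_label):
    """Return how many extra rows each label needs to reach the target."""
    counts = Counter(row["intent"] for row in rows)
    return {
        label: max(0, target_per_label - counts[label])
        for label in labels
    }


# The example from the text: 40 payment issues, 300 order tracking messages.
existing = (
    [{"intent": "payment_issue"}] * 40
    + [{"intent": "order_tracking"}] * 300
)
shortfall = rows_needed_per_label(
    existing, ["payment_issue", "order_tracking"], target_per_label=300
)
print(shortfall)  # {'payment_issue': 260, 'order_tracking': 0}
```

Whether 300 is the right target is a separate question. A smaller boost generated in several varied batches may serve better than one large request.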

Step 9: Document the Dataset Like a Responsible Adult

Dataset documentation is not glamorous, but it saves enormous confusion later. Future-you will either thank present-you or write strongly worded comments in a notebook cell.

Include This Documentation

- Dataset purpose

- Source type: human, synthetic, or mixed

- Field definitions

- Label definitions

- Generation method

- Review process

- Known limitations

- Privacy and bias considerations

You can ask ChatGPT to draft this “dataset card” for you based on your schema and workflow. Then edit it with actual details. Do not let the model invent your governance story. That is how audits become emotionally interesting.
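One lightweight way to keep the card honest is to render it from a fixed list of sections and refuse to produce it while any section is empty. The sketch below uses the section names from the list above; the plain-text layout and the `render_dataset_card` helper are assumptions, not a standard format.

```python
# Section names taken from the documentation list above.
CARD_SECTIONS = [
    "Dataset purpose",
    "Source type",
    "Field definitions",
    "Label definitions",
    "Generation method",
    "Review process",
    "Known limitations",
    "Privacy and bias considerations",
]


def render_dataset_card(name, sections):
    """Render a plain-text dataset card; raise if any section is blank."""
    missing = [
        section for section in CARD_SECTIONS
        if not sections.get(section, "").strip()
    ]
    if missing:
        raise ValueError("dataset card is missing: " + ", ".join(missing))
    lines = [f"Dataset card: {name}", ""]
    for section in CARD_SECTIONS:
        lines += [section, sections[section].strip(), ""]
    return "\n".join(lines)
```

The check only proves that every section has text in it. Whether that text is true is still a job for a human.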

Step 10: Export the Dataset in the Right Format

Different uses need different formats.

- CSV: Good for analytics and spreadsheet-friendly workflows

- JSON: Good for nested fields and application pipelines

- JSONL: Good for many model training and fine-tuning workflows

- Parquet: Good for large-scale data pipelines

If your goal is fine-tuning, make sure each line of the JSONL file is a complete JSON object in the format your training tool requires. If your goal is evaluation, make sure you preserve expected output fields, ground truth labels, and metadata like difficulty or source type.
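Exporting to CSV and JSONL needs nothing beyond the Python standard library. The sketch below assumes flat rows with `text` and `intent` fields and writes to a temporary directory purely for demonstration. The file names are placeholders.

```python
import csv
import json
import os
import tempfile


def export_jsonl(rows, path):
    """Write one JSON object per line."""
    with open(path, "w", encoding="utf-8") as f:
        for row in rows:
            f.write(json.dumps(row, ensure_ascii=False) + "\n")


def export_csv(rows, path, fieldnames):
    """Write rows as CSV with a header; the csv module handles quoting."""
    # newline="" lets the csv module control line endings itself.
    with open(path, "w", encoding="utf-8", newline="") as f:
        writer = csv.DictWriter(f, fieldnames=fieldnames)
        writer.writeheader()
        writer.writerows(rows)


rows = [
    {"text": "Where is my package?", "intent": "order_tracking"},
    {"text": 'My "unbreakable" lamp broke, I want a refund.', "intent": "refund_request"},
]

with tempfile.TemporaryDirectory() as tmp:
    jsonl_path = os.path.join(tmp, "dataset.jsonl")
    csv_path = os.path.join(tmp, "dataset.csv")
    export_jsonl(rows, jsonl_path)
    export_csv(rows, csv_path, fieldnames=["text", "intent"])

    # Read both files back to confirm they round-trip.
    with open(jsonl_path, encoding="utf-8") as f:
        jsonl_lines = f.read().splitlines()
    with open(csv_path, encoding="utf-8", newline="") as f:
        csv_rows = list(csv.DictReader(f))
```

By default `csv.DictWriter` raises a `ValueError` if a row contains a key that is not in `fieldnames`, which doubles as a cheap schema-drift alarm.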

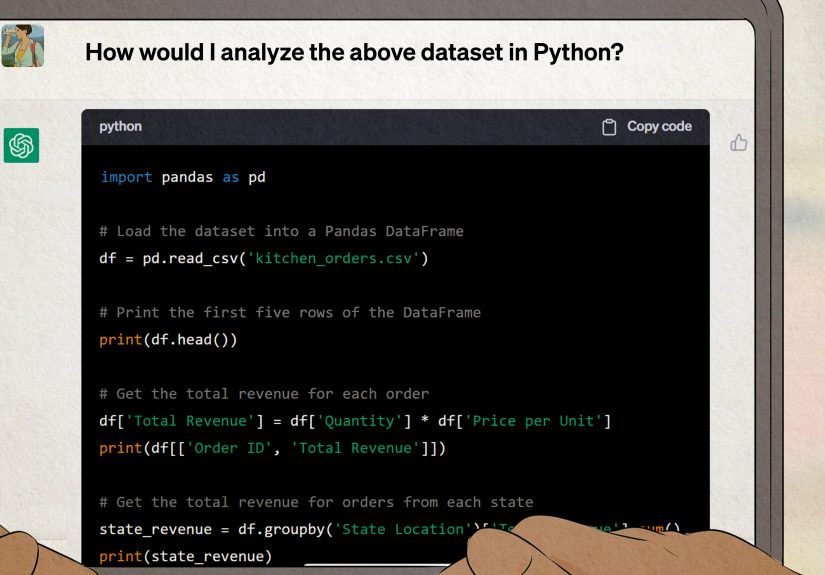

A Practical Example: Building a Sentiment Dataset with ChatGPT

Let’s say you want a sentiment analysis dataset for product reviews.

Your Goal

Create 600 product reviews labeled as positive, neutral, or negative.

Your Fields

- review_id

- review_text

- sentiment

- product_category

- review_length

Your Workflow

- Write 15 seed reviews by hand

- Ask ChatGPT to generate 50 rows at a time

- Require valid JSON output

- Review for repetition and label consistency

- Add edge cases like sarcasm, mixed opinions, and vague praise

- Deduplicate and normalize formatting

- Export to CSV and JSONL

That is a sensible use of ChatGPT. You are not trusting it blindly. You are using it as a structured content generator inside a controlled workflow.
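The normalization step for this example could look something like the sketch below. It assumes the model returns `review_text`, `sentiment`, and `product_category`, while `review_id` and `review_length` are filled in locally. Treating `review_length` as a word count is an assumption, since the field could just as well hold a character count or a short/medium/long bucket.

```python
SENTIMENTS = {"positive", "neutral", "negative"}


def finalize_reviews(raw_rows):
    """Normalize generated reviews and add review_id and review_length."""
    finished, rejected = [], []
    for raw in raw_rows:
        # Collapse runs of whitespace and trim the ends.
        text = " ".join(str(raw.get("review_text", "")).split())
        sentiment = str(raw.get("sentiment", "")).strip().lower()
        category = str(raw.get("product_category", "")).strip().lower()
        if not text or not category or sentiment not in SENTIMENTS:
            rejected.append(raw)
            continue
        finished.append({
            "review_id": len(finished) + 1,
            "review_text": text,
            "sentiment": sentiment,
            "product_category": category,
            "review_length": len(text.split()),  # word count (assumption)
        })
    return finished, rejected
```

Rejected rows are returned rather than silently dropped, so you can see what the model got wrong and adjust the prompt.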

Common Mistakes to Avoid

- Generating too much too soon: Big batches hide bad patterns.

- Skipping seed examples: The model needs anchors.

- Ignoring privacy: Synthetic does not automatically mean safe.

- Using only AI-generated test data: You still need trusted human-reviewed examples.

- Forgetting documentation: A mysterious dataset is a future problem.

- Assuming realism: Text that sounds human is not always representative.

When ChatGPT Is a Great Fit, and When It Is Not

Great Fit

- Creating synthetic examples for prototyping

- Expanding underrepresented classes

- Converting messy notes into structured fields

- Drafting labels, rubrics, and dataset cards

- Building evaluation sets with varied edge cases

Not a Great Fit

- Generating authoritative ground truth for high-stakes domains without expert review

- Replacing real observational data when distribution fidelity matters deeply

- Creating datasets involving private, regulated, or highly sensitive information without proper controls

- Producing factual datasets where hallucinations would quietly poison the whole project

Final Thoughts

If you want to use ChatGPT to create a dataset, the winning formula is simple: define the objective, design the schema, provide seed examples, generate in batches, review aggressively, document everything, and keep a human in the loop.

In other words, do not ask ChatGPT to replace your data process. Ask it to accelerate your data process.

That difference matters. One approach gives you a usable dataset. The other gives you 4,000 rows of polished confusion and a sudden desire to take a long walk.

When used carefully, ChatGPT can be an excellent dataset creation partner. It is fast, flexible, and surprisingly good at producing structured content when given clear constraints. But quality still comes from the workflow around the model: your schema, your examples, your checks, and your judgment. The robot can help build the bricks. You still need to inspect the house.

Field Notes: Real-World Experiences Using ChatGPT to Create Datasets

One of the most common experiences people report when using ChatGPT for dataset creation is that the first results look amazing right up until you inspect them closely. The formatting is neat. The labels seem clean. The rows are readable. You feel powerful for about seven minutes. Then you notice that 18 examples start with almost the same sentence, half the “hard” examples are not actually hard, and the supposedly diverse data somehow sounds like it was written by the same very polite ghost. That moment is important because it teaches the main lesson of AI-assisted data work: polish is not proof of quality.

Another repeated lesson is that prompt quality changes everything. People often begin with broad requests like “make me a dataset of support tickets,” and the result is bland. Once they switch to tighter instructions with schema rules, approved labels, banned content, sample rows, and explicit edge cases, the output improves dramatically. It is not magic. It is constraint. ChatGPT tends to perform better when the task is framed like a system, not a wish.

Many users also discover that ChatGPT is especially useful in the middle of the workflow, not just at the beginning. Yes, it can generate new rows, but it is also handy for rewriting fields into a consistent format, flagging possible duplicates, translating tone categories into plain-language guidelines, and drafting dataset documentation. In practice, that means the model often saves more time during cleanup and organization than during raw generation.

A more sobering experience comes from people working with sensitive or domain-specific content. They learn quickly that synthetic data is not the same thing as risk-free data. If the prompt or seed examples contain real customer details, those details can resurface in the generated rows. If the domain is highly technical, the model may produce language that sounds correct while quietly missing the point. That is why expert review matters most in healthcare, finance, legal work, and compliance-heavy environments. A dataset can look complete while still being wrong in all the expensive places.

Finally, experienced practitioners almost always arrive at the same conclusion: the best dataset workflows are iterative. They generate a little, inspect a lot, revise prompts, rebalance classes, add edge cases, and document what changed. They do not worship the first output. They shape it. Once that mindset clicks, ChatGPT becomes genuinely useful. Not because it eliminates data work, but because it removes a large amount of repetitive effort and leaves humans with the part they should own anyway: deciding what “good” actually means.

Conclusion

ChatGPT can absolutely help you create a dataset step by step, but the secret is structure. The more clearly you define the job, the more valuable the output becomes. Treat the model like a fast assistant with a tendency to improvise, and you will get speed without surrendering quality. Treat it like an all-knowing oracle, and your dataset may end up looking confident, consistent, and gloriously wrong.