Table of Contents

- Why a Hard-Coded Root Password Is Such a Big Deal

- Default Passwords, Hard-Coded Passwords, and Hidden Accounts Are Not the Same Thing

- The Pattern Has Shown Up Again and Again in IoT Cameras

- When a Camera Flaw Becomes Everyone’s Problem

- Why Do Vendors Keep Making This Mistake?

- What Consumers and Businesses Should Do Right Now

- What Manufacturers Should Have Been Doing All Along

- Experiences From the Field: What This Looks Like in Real Life

- Conclusion

Note: This article is for security awareness and defensive best practices only. It does not include exploit instructions.

Smart cameras are supposed to watch the driveway, the stockroom, the nursery, or the office lobby. What they are not supposed to do is quietly moonlight as the weakest link on your network. Yet that is exactly what happens when researchers crack open the security of an internet-connected camera and discover the digital equivalent of a spare house key taped under the doormat: a hard-coded root password.

That phrase sounds technical, but the idea is brutally simple. A root password unlocks the root account, which has the highest level of access on a device. Hard-coded means the password is built into the product itself, often buried in firmware, and impossible or impractical for the customer to change. In plain English, someone, somewhere, shipped a camera with a secret master key baked into it. And if one researcher can find it, a criminal can too.

Over the years, security researchers and journalists have repeatedly found this kind of flaw in cameras, DVRs, and other IoT gear. The names change, the logos change, the packaging gets shinier, but the plot twist stays weirdly familiar: hidden credentials, default accounts, weak remote access, and firmware that behaves like security was added five minutes before launch. That pattern is why this issue matters far beyond one camera model. It tells a bigger story about how fragile connected devices can be when convenience beats security.

Why a Hard-Coded Root Password Is Such a Big Deal

A normal password can be changed. A bad password policy can be improved. But a hard-coded root password is a different beast. It is not just weak security. It is structural insecurity. The problem lives inside the product’s design, which means the customer may never truly control the device they bought.

That matters because “root” access usually means full control. A person with that level of access may be able to change settings, enable remote services, modify network behavior, view or redirect video, install persistent changes, or use the device as a foothold into other systems on the same network. Suddenly the camera is not just a camera. It is a tiny, internet-connected computer with a front-row seat inside a home or business.

MITRE classifies hard-coded credentials as a serious weakness (CWE-798, “Use of Hard-coded Credentials”) for a reason. Once a built-in secret is discovered, it can be reused across every device that ships with it. That creates scale. One vulnerable gadget is a headache. Thousands of vulnerable gadgets running the same hidden credentials become an ecosystem-wide problem. That is how a private design mistake can turn into a public security mess.

Default Passwords, Hard-Coded Passwords, and Hidden Accounts Are Not the Same Thing

These terms often get tossed around like they all mean “bad password,” but they are not identical.

Default Passwords

These are factory-set credentials that the user is expected to change. They are risky because many people never bother, or the setup process makes changing them annoyingly difficult. Think of the old “admin/admin” era, where attackers barely needed a keyboard and a pulse.

Hard-Coded Passwords

These are built into the software or firmware. Users may not know they exist, may not be able to see them in the interface, and often cannot change them. This is where things go from sloppy to alarming.

Hidden Debug or Backdoor Accounts

These are service accounts left behind for testing, maintenance, or engineering convenience. Vendors may call them “support tools.” Attackers call them “thank you very much.”

In practice, these categories often overlap. A product may ship with weak defaults, undocumented services, and a hidden administrative account all at once. That is not defense in depth. That is vulnerability in layers.

The Pattern Has Shown Up Again and Again in IoT Cameras

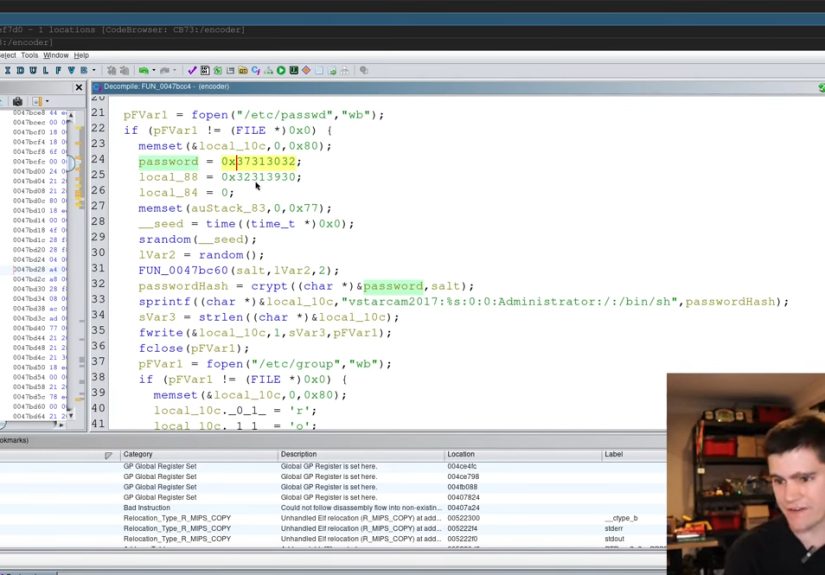

Security reporting over the last decade shows that camera-related IoT flaws are not one-off oddities. Researchers have found hidden credentials in surveillance DVRs, backdoor-style password issues in IP cameras, and insecure video products that could expose live streams or administrative controls. In some cases, the vulnerable firmware was reused across brands, which meant one core weakness spread outward through multiple labels and resellers.

One widely discussed problem involved surveillance video recorders whose web interfaces could be accessed using credentials embedded in firmware. Another case involved Sony IP cameras where researchers found hard-coded password material tied to privileged accounts, along with the kind of debug functionality that security teams hate and attackers adore. D-Link cameras were also reported to have a built-in password issue that opened the door to live video access. The lesson is not that one brand had a bad week. The lesson is that the market repeatedly tolerated designs that should never have made it to customers.

Consumer Reports has also highlighted serious security problems in connected video doorbells, reminding buyers that low-cost smart-home hardware can carry very expensive security consequences. And the concern is no longer limited to homes. EFF has pointed to serious vulnerabilities in camera systems used for automated license plate recognition, where weak or default-style access can create risks not just for privacy, but for public safety and infrastructure trust.

When a Camera Flaw Becomes Everyone’s Problem

It is easy to think, “So what? It is just a camera.” Unfortunately, that is how a lot of big messes begin.

Privacy Exposure

This is the most obvious risk. If a camera is compromised, attackers may be able to view live feeds, peek at recorded footage, or track routines inside homes and businesses. That is not merely creepy. It can expose children, customers, staff, physical layouts, delivery patterns, and security procedures.

Network Foothold

Cameras sit on networks. Once compromised, they may give intruders a platform from which to scan or probe other systems. A cheap camera can become the most affordable beachhead in a much more valuable environment.

Botnets and DDoS Attacks

The Mirai era made this painfully clear. Massive botnets were built by sweeping up poorly secured IoT devices, including cameras and DVRs, and using default or widely known credentials to bring them under control. Consumers may have bought a camera to monitor the front porch, only to discover it had joined a cyber riot on the wider internet.

Operational and National Security Risks

More recent reporting has shown that compromised IP cameras are now viewed not just as a botnet ingredient, but as intelligence assets. In other words, insecure cameras can become eyes and ears for someone else. That pushes the threat beyond nuisance, embarrassment, or bandwidth abuse. It edges into physical security and strategic risk.

Why Do Vendors Keep Making This Mistake?

Because shipping secure products is hard, and shipping insecure products is often faster. That is the uncomfortable truth.

Many IoT products are developed under intense price pressure. Vendors reuse chipsets, software stacks, and firmware components across multiple products. White-label manufacturing is common. Support windows can be short. Security teams are small. Quality assurance may focus on whether the camera streams video, not whether it quietly contains a buried administrative skeleton key.

Debug accounts are especially tempting during development. Engineers want a fast way to test or recover devices. The problem is that temporary shortcuts have a terrible habit of becoming permanent features. Once a hidden account ships in production, it stops being an engineering convenience and starts being an attacker convenience.

There is also a user-experience problem. Some vendors assume customers hate setup friction, so they avoid forcing strong credential changes during onboarding. That may reduce return rates in the short term, but it creates long-term risk. Security that depends on customers doing everything right, immediately, without guidance, is not really security. It is optimism with LEDs.

What Consumers and Businesses Should Do Right Now

You cannot rewrite a vendor’s firmware from your kitchen table, but you can reduce your exposure.

1. Change what you can change

Replace factory usernames and passwords immediately. If the product makes that difficult, take that as a warning sign, not a fun puzzle.

2. Turn on automatic updates

Enable automatic updates where the device offers them, and install any security patches the vendor provides. If the device no longer receives updates, it may be aging into “works fine, insecure forever” territory.

3. Use two-factor authentication when available

This matters most for the cloud account tied to the camera app. Many camera “hacks” in headlines are really account compromises made easier by weak or reused passwords.

4. Put IoT devices on a separate network

The FBI and FTC both emphasize basic network hygiene. A guest network or segmented network can help keep a camera problem from becoming a laptop problem.

5. Disable features you do not need

Remote administration, unused services, universal plug and play, extra cloud integrations, and forgotten app permissions all expand the attack surface.

6. Check the support period before you buy

An unsupported camera is basically a time capsule with Wi-Fi. The new U.S. Cyber Trust Mark program is meant to make this kind of information easier to evaluate, along with details such as how to change the default password and whether updates are automatic.
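Several of the steps above (network separation, update status, and support period) can be checked routinely once devices are written down in a simple inventory. The sketch below is only an illustration: the `Device` fields, the `audit` function, the sample devices, and the `192.168.50.0/24` IoT network are all assumptions made up for this example, not part of any vendor tool.

```python
from dataclasses import dataclass
from datetime import date
from ipaddress import ip_address, ip_network


@dataclass
class Device:
    name: str
    ip: str
    support_ends: date   # end-of-support date published by the vendor
    auto_update: bool    # whether automatic updates are switched on


def audit(device, iot_network, today):
    """Return a list of plain-language findings for one device."""
    findings = []
    # Step 4: the device should live on the separate IoT or guest network.
    if ip_address(device.ip) not in ip_network(iot_network):
        findings.append("not on the isolated IoT network")
    # Step 6: an expired support period means no more security fixes.
    if device.support_ends < today:
        findings.append("vendor support has ended")
    # Step 2: automatic updates should be enabled where available.
    if not device.auto_update:
        findings.append("automatic updates are off")
    return findings


# Hypothetical inventory, for illustration only.
inventory = [
    Device("porch-cam", "192.168.50.10", date(2030, 1, 1), True),
    Device("stockroom-cam", "192.168.1.23", date(2021, 6, 30), False),
]

for dev in inventory:
    issues = audit(dev, "192.168.50.0/24", date.today())
    print(dev.name, "->", "; ".join(issues) or "no findings")
```

A check like this does not make a camera secure by itself. It only makes drift visible, so that the "temporary" exceptions get noticed before they become permanent.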

What Manufacturers Should Have Been Doing All Along

The industry does not need a magic spell here. It needs discipline.

- Do not ship universal default passwords.

- Do not bury service credentials in firmware.

- Require secure setup during first use.

- Remove debug accounts from production builds.

- Provide signed, reliable update mechanisms.

- Publish clear support timelines.

- Maintain a vulnerability disclosure program.

- Design products to be secure by default, not secure by customer luck.

CISA’s secure-by-design push and NIST’s IoT guidance both point in the same direction: security has to be built into the product, not outsourced to the end user after the fact. That includes authentication, updateability, logging, configuration control, and the boring-but-essential engineering work that prevents one hidden credential from becoming a headline.
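Two items on the list above, not burying service credentials in firmware and removing debug accounts from production builds, lend themselves to an automated release check. The following is a minimal sketch, assuming the build pipeline can read the firmware image's shadow-format password file as text. The function name and the sample entries are hypothetical, and a real release gate would need to look at far more than this one file.

```python
def login_enabled_accounts(shadow_text):
    """Return account names in shadow-format text that allow password login."""
    risky = []
    for line in shadow_text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        fields = line.split(":")
        if len(fields) < 2:
            continue
        name, pw_field = fields[0], fields[1]
        # A password field starting with "!" or "*" marks an account
        # that cannot log in with a password.
        locked = pw_field.startswith(("!", "*"))
        if not locked:
            # Covers a baked-in password hash and an empty field
            # (an account with no password at all).
            risky.append(name)
    return risky


# Made-up sample entries for illustration; the hash is not a real one.
sample = """\
root:$6$examplesalt$examplehashvalue:19000:0:99999:7:::
daemon:*:19000:0:99999:7:::
support:!:19000:0:99999:7:::
debug::19000:0:99999:7:::
"""

flagged = login_enabled_accounts(sample)
print(flagged)
```

A build pipeline could refuse to publish an image whenever this list is non-empty, which forces any shared, factory-set login to be justified before it ships rather than discovered after.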

Experiences From the Field: What This Looks Like in Real Life

Talk to enough security researchers, IT admins, and smart-home owners, and a pattern emerges that is almost comically consistent. The first experience is disbelief. Someone buys a camera from a recognizable marketplace, plugs it in, scans a QR code, watches the video feed pop up, and thinks, “Great, done.” Then a security report lands. Or the vendor emails about a critical update. Or the device starts making unexplained outbound connections. Suddenly the buyer realizes they did not just purchase a camera. They adopted a tiny networked computer with a lens and a trust problem.

Small businesses often have the roughest version of this experience. They install cameras for practical reasons: shrinkage, safety, after-hours monitoring, insurance. The system works, nobody complains, and the gear quietly becomes part of the building’s nervous system. Months later, an IT consultant reviews the network and finds old firmware, reused passwords, remote access still enabled, and devices sitting on the same segment as office PCs. Nobody intended to build a security mess. It just happened the way clutter happens in a garage: one “temporary” shortcut at a time.

Security researchers describe another recurring experience: the vulnerability itself is often less surprising than the vendor response. The technical issue may be obvious, but the patch cycle can be painfully slow. Some vendors fix quickly and communicate clearly. Others act as though the problem is a public-relations inconvenience rather than a design flaw that affects real people. That gap matters. A camera in a family kitchen or a daycare hallway is not a harmless gadget when its security fails.

Home users tell a similar story, just with more confusion and fewer acronyms. They assume the mobile app equals security. They assume a product sold by a major retailer has been vetted. They assume “password protected” means truly protected. Then they discover how much security depends on things they were never asked to think about: firmware support, cloud architecture, hidden services, default permissions, and whether the manufacturer still exists six months later. It is a rough lesson in how modern convenience often arrives with invisible maintenance requirements.

The most useful experience, though, comes after the panic. People who go through one IoT security scare tend to become much more disciplined buyers. They start asking better questions. Does this vendor publish security information? How long is support promised? Can I change credentials easily? Are updates automatic? Can I isolate this device on a guest network? That shift is healthy. It turns security from an afterthought into a shopping criterion.

In that sense, every hard-coded password story is more than a cautionary tale. It is a reminder that connected devices are part of real-world security now. Cameras are no longer simple appliances. They are software products with lenses attached. Once buyers, businesses, retailers, and regulators fully absorb that truth, the market gets better. Until then, every new report about a hidden root password will feel less like shocking news and more like the latest rerun of a show nobody asked to renew.

Conclusion

The headline may sound dramatic, but the underlying lesson is straightforward: when an IoT camera contains a hard-coded root password, the problem is not just one bug. It is a failure of product design, security culture, and accountability. These flaws can expose private spaces, enable botnets, create footholds into larger networks, and turn everyday devices into long-term liabilities.

The good news is that the industry finally faces more pressure to improve. Security labels, secure-by-design principles, baseline standards, and stronger public scrutiny are all pushing vendors in the right direction. But consumers and businesses still need to shop carefully, configure wisely, and treat every connected camera like what it really is: a computer that happens to watch things.

And that is the punchline nobody wanted. The gadget sold as “plug-and-play peace of mind” can become “plug-and-pray cybersecurity” when manufacturers cut corners. A camera should help you sleep better at night, not give your network insomnia.