Table of Contents

- What Is a Cryptoprocessor, Anyway?

- What Does “Defeating” a Cryptoprocessor With Laser Beams Really Mean?

- Why Attack the Hardware Instead of the Math?

- How Laser Fault Injection Works at a High Level

- What Makes These Attacks So Important to Security Teams?

- Real-World Lessons From Modern Hardware Security

- How Engineers Defend Cryptoprocessors Against Laser and Fault Attacks

- Why This Topic Matters Beyond Semiconductor Labs

- The Future of Hardware Security: More Layers, Less Wishful Thinking

- Final Thoughts

- Experiences and Observations Related to “Defeating A Cryptoprocessor With Laser Beams”

It sounds like a movie trailer voice-over: “In a world where your data is protected by advanced cryptography, one tiny beam of light changes everything.” Dramatic? Yes. Fiction? Not quite.

In hardware security, the phrase “defeating a cryptoprocessor with laser beams” refers to a real class of physical attacks in which researchers use precise optical fault injection to disturb a chip at exactly the wrong moment. The goal is not to melt the processor into a tiny puddle of sadness. The goal is to create a controlled error, or fault, that causes the chip to behave differently than intended. Sometimes that means skipping a check. Sometimes it means corrupting data. Sometimes it means turning a carefully designed trust boundary into a very expensive suggestion.

That is what makes this topic so fascinating. Modern cryptoprocessors are built to guard secrets: encryption keys, secure boot chains, authentication credentials, and the integrity of sensitive operations. Yet when attackers or researchers can influence the hardware itself, they may not need to “break” the cryptographic math at all. They can go after the implementation instead. In other words, they stop arguing with the lock and start kicking the doorframe.

This article explores what laser-based attacks on cryptoprocessors really mean, why they matter, what they reveal about hardware trust, and how security engineers are designing systems that are harder to tamper with. We will keep it informative, practical, and readable. No lab playbook, no sci-fi nonsense, and no pretending your average coffee maker needs military-grade anti-laser armor.

What Is a Cryptoprocessor, Anyway?

A cryptoprocessor is a chip or hardware block designed to perform security-sensitive tasks. These tasks can include encryption, decryption, key storage, digital signatures, secure boot validation, authentication, and random number generation. Some cryptoprocessors live in dedicated hardware security modules, smart cards, payment systems, or secure elements. Others are embedded inside larger systems such as laptops, phones, servers, vehicles, and industrial devices.

The reason organizations trust cryptoprocessors is simple: hardware is supposed to be harder to tamper with than software. A properly designed security chip can isolate secrets, restrict access, detect tampering, and erase sensitive values when something looks suspicious. That is the dream.

The catch is that hardware exists in the physical world, and the physical world is rude. It has heat, voltage, electromagnetic interference, probing tools, and, yes, lasers.

What Does “Defeating” a Cryptoprocessor With Laser Beams Really Mean?

In security research, a laser attack usually falls under the broader category of fault injection. Fault injection means deliberately causing a device to make a mistake. The attacker does not necessarily read the key directly from memory like some Hollywood hacker with dramatic sunglasses. Instead, the attacker tries to nudge the chip into producing an incorrect intermediate state or output that leaks useful information or bypasses a security decision.

Think of it like interrupting a referee right as they are making a call. If the interruption happens at the right moment, the wrong decision may be recorded, even though the rulebook never changed.

Laser fault injection is especially interesting because it can be highly targeted. A narrow optical stimulus can affect a very small region of silicon, which makes it appealing for attacks against security checks, memory cells, control logic, or critical execution paths. In many research contexts, the attacker first removes part of the chip package (decapsulation) to expose the silicon die so it can be influenced more directly. That alone tells you something important: these are not casual attacks. They are specialized, physical, and usually aimed at high-value targets.

Why Attack the Hardware Instead of the Math?

Because the math is often the hard part.

Strong cryptographic algorithms such as AES and modern digital signature schemes are designed to resist direct cryptanalysis. Breaking them mathematically can be far less practical than exploiting the way they are implemented in real systems. A chip that stores keys perfectly but mishandles a fault condition can still lose the game.

This is why hardware security experts spend so much time thinking about side-channel attacks, tamper resistance, secure boot, and roots of trust. A cryptoprocessor is not secure simply because it runs cryptography. It is secure only if the entire implementation behaves safely under stress, error, and malicious interference.

That distinction matters for everyone from chip vendors to cloud providers. A secure processor that can be tricked into skipping a verification step is like a bank vault with a world-class door and a suspiciously open window.

How Laser Fault Injection Works at a High Level

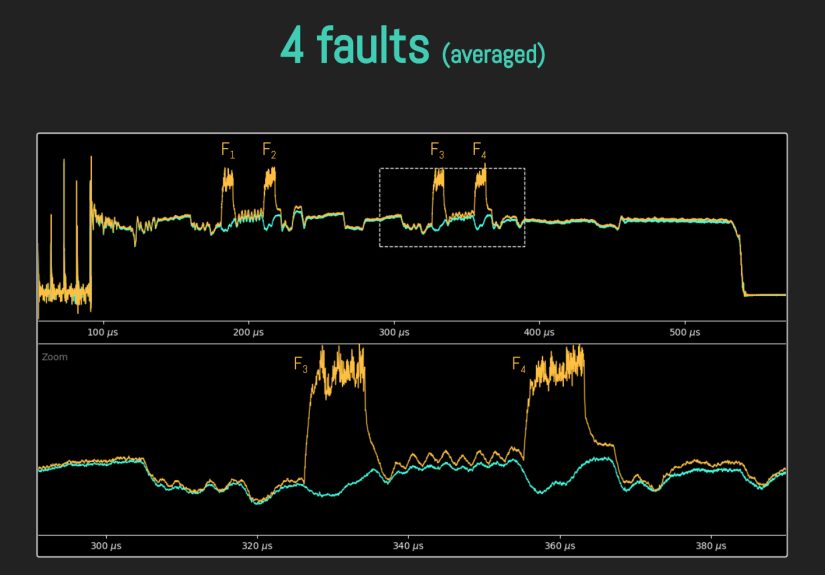

At a high level, the idea is straightforward: optical energy interacts with semiconductor structures and can disturb normal chip behavior. If that disturbance lands at the right place and moment, it may flip a bit, alter logic behavior, or cause an instruction or check to misfire.
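
To make the "flip a bit" idea concrete, here is a minimal Python model of why a decision stored as a single bit is fragile. It is a simulation, not firmware and not an attack procedure. The constants `0xA55A`/`0x5AA5` and the function names are illustrative assumptions; the underlying idea, encoding security decisions as values that are far apart in bit space, is a widely discussed hardening pattern.

```python
FLAG_BITS = 16

# Naive encoding: 0 means "reject", anything nonzero means "accept",
# mirroring a C-style `if (flag)` test.
NAIVE_REJECT = 0

# Hardened encoding (illustrative constants): the two legal values are
# bitwise complements, so they differ in all 16 bit positions.
HARDENED_ACCEPT = 0xA55A
HARDENED_REJECT = 0x5AA5


def flip_bit(value: int, bit: int) -> int:
    """Model a single-bit fault by XOR-ing one bit position."""
    return value ^ (1 << bit)


def decide_naive(flag: int) -> str:
    return "accept" if flag else "reject"


def decide_hardened(flag: int) -> str:
    if flag == HARDENED_ACCEPT:
        return "accept"
    if flag == HARDENED_REJECT:
        return "reject"
    return "fault-detected"  # any other value is treated as tampering


if __name__ == "__main__":
    # A single flipped bit turns the naive "reject" into "accept".
    print(decide_naive(flip_bit(NAIVE_REJECT, 0)))  # accept
    # Every single-bit flip of the hardened "reject" lands on an illegal value.
    print({decide_hardened(flip_bit(HARDENED_REJECT, b)) for b in range(FLAG_BITS)})
    # {'fault-detected'}
```

The hardened version does not prevent the fault. It turns a silent wrong answer into a detectable one, which the surrounding code still has to handle safely.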

Timing Is Everything

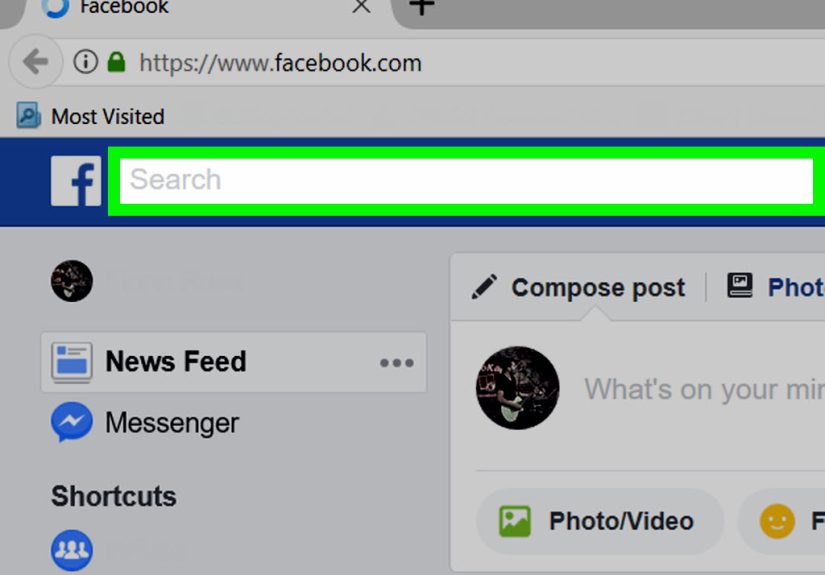

Security-critical operations often happen in tiny windows of time. A processor might compare a signature, validate a boot image, check a privilege bit, or confirm whether a command should be accepted. If the computation is disturbed during one of those moments, the result can be wrong in a way that benefits an attacker.

Precision Matters

Unlike blunt-force environmental attacks, laser-based methods are valued in research because they can be localized. The attacker is not just shouting at the chip. They are trying to whisper in exactly the wrong transistor’s ear.

The Package Is Part of the Battle

Chip packaging, coatings, shields, opaque layers, and tamper-detection features all complicate physical attacks. That is why defensive design is such a big deal. Good packaging is not glamorous, but neither is losing your root of trust to a glorified flashlight with a PhD.

What Makes These Attacks So Important to Security Teams?

Laser-based attacks matter because they expose a truth many security programs learn the hard way: trust in hardware is conditional. If a device can be induced to misbehave physically, then secure storage, authentication, encryption, and boot integrity may all be undermined without a traditional software exploit.

That has major implications in several areas:

Secure Boot and Firmware Integrity

If a hardware root of trust fails during boot verification, an attacker may be able to load code that should never run. This turns physical fault attacks into a serious concern for platform integrity, embedded devices, and supply-chain-sensitive environments.
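
A boot-time check can be written so that it fails closed and does not hinge on a single comparison. The Python sketch below assumes a SHA-256 hash comparison as a stand-in for a real signature verification, and `TRUSTED_IMAGE`, `verify_image`, and `boot` are hypothetical names. Duplicating the check raises the bar for an attacker who can only corrupt one step, but it is not a complete defense on its own.

```python
import hashlib
import hmac

# Hypothetical reference values. On a real device the expected digest (or a
# public key) would be anchored in immutable storage such as ROM or fuses.
TRUSTED_IMAGE = b"example firmware image"
EXPECTED_DIGEST = hashlib.sha256(TRUSTED_IMAGE).digest()


def verify_image(image: bytes, expected: bytes) -> bool:
    """Fail-closed verification with a duplicated comparison."""
    approved = False  # default decision is "do not boot"
    if hmac.compare_digest(hashlib.sha256(image).digest(), expected):
        # Recompute and compare again so that a single skipped or corrupted
        # comparison is not enough to approve the image by itself.
        if hmac.compare_digest(hashlib.sha256(image).digest(), expected):
            approved = True
    return approved


def boot(image: bytes) -> str:
    if verify_image(image, EXPECTED_DIGEST):
        return "jump-to-image"
    return "halt"  # refuse to run code that did not verify


if __name__ == "__main__":
    print(boot(TRUSTED_IMAGE))         # jump-to-image
    print(boot(b"modified firmware"))  # halt
```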

Key Protection

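Cryptographic keys are valuable precisely because they are supposed to remain inaccessible. Fault attacks can sometimes reveal information about those keys indirectly: by comparing a device's correct outputs with the faulty outputs it produces under induced errors, an attacker may be able to narrow down or recover secret values. This approach is known as differential fault analysis.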
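
A well-known illustration is RSA signing with the Chinese Remainder Theorem (RSA-CRT): releasing even one faulty signature can leak information about the private key, so a common countermeasure is to verify the result before releasing it. The Python sketch below uses deliberately tiny textbook parameters (p = 61, q = 53), which are completely insecure, and its `fault` flag is a hypothetical stand-in for an induced error. It shows only the defensive check, not any key-recovery step.

```python
from typing import Optional

# Textbook-sized RSA parameters: insecure, for illustration only.
P, Q = 61, 53
N = P * Q                          # 3233
E = 17
D = pow(E, -1, (P - 1) * (Q - 1))  # private exponent, 2753 here (Python 3.8+)


def sign_crt(m: int, fault: bool = False) -> int:
    """RSA-CRT signature of m; fault=True models a corrupted mod-P half."""
    dp, dq = D % (P - 1), D % (Q - 1)
    q_inv = pow(Q, -1, P)
    s_p = pow(m, dp, P)
    s_q = pow(m, dq, Q)
    if fault:
        s_p ^= 1  # simulated single-bit error
    # Garner recombination: s = s_q + Q * (q_inv * (s_p - s_q) mod P)
    h = (q_inv * (s_p - s_q)) % P
    return s_q + h * Q


def sign_checked(m: int, fault: bool = False) -> Optional[int]:
    """Verify-before-release: withhold any signature that fails to verify."""
    s = sign_crt(m, fault)
    if pow(s, E, N) != m % N:
        return None  # suppress the faulty output instead of releasing it
    return s


if __name__ == "__main__":
    print(sign_checked(65) == pow(65, D, N))  # True
    print(sign_checked(65, fault=True))       # None
```

The check costs one extra public-key operation per signature, which is cheap next to the private-key work it protects.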

Certification and Compliance

For regulated products, passing algorithm validation is not enough. Physical security, tamper evidence, zeroization behavior, environmental protections, and broader module validation all become part of the story.

High-Value Targets

These attacks are most relevant when the prize is worth the effort: payment systems, identity hardware, government systems, trusted platform components, embedded controllers, industrial systems, secure elements, and advanced compute platforms. Nobody is setting up an optical fault lab to steal your sandwich order history. Probably.

Real-World Lessons From Modern Hardware Security

One reason this topic stays relevant is that physical fault attacks are not frozen in academic history. They continue to shape real-world security thinking. Researchers and vendors still discuss fault injection in the context of secure boot, trusted execution, post-quantum cryptography, and hardware roots of trust.

That is an important point for executives and engineers alike. The lesson is not “chips are doomed.” The lesson is that secure hardware must be designed with an assumption of hostile physical conditions, not just ideal laboratory behavior. If your security architecture only works on polite silicon having a calm day, it is not much of an architecture.

Modern threat models increasingly include physical attacks alongside software exploitation, supply-chain risks, and implementation weaknesses. That broader view helps explain why secure hardware design now emphasizes layered protections rather than magical single-point defenses.

How Engineers Defend Cryptoprocessors Against Laser and Fault Attacks

The good news is that hardware designers are not standing around waiting to be roasted by photons. Over the years, they have developed multiple defensive strategies.

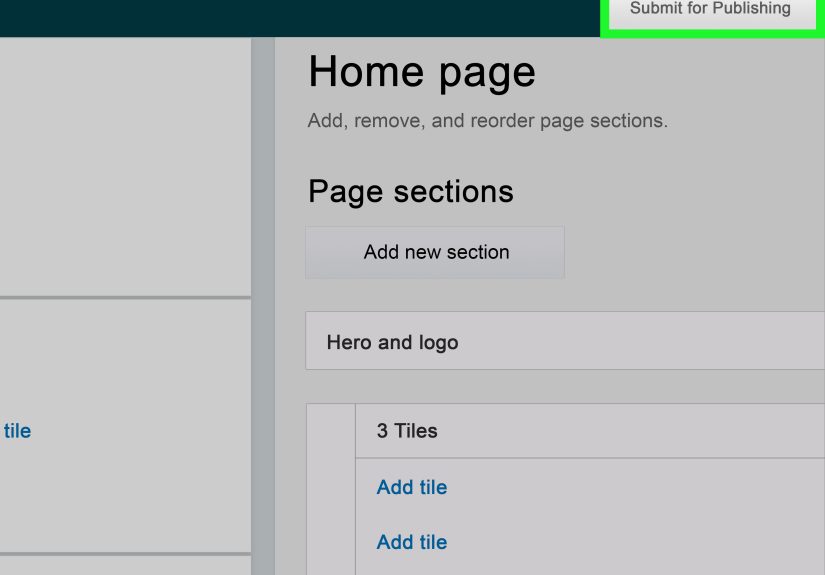

Tamper-Evident and Tamper-Responsive Design

Some modules are built to show evidence of physical interference. More advanced ones can detect tampering and trigger a response, including zeroization, which wipes sensitive plaintext secrets or key material. In high-assurance environments, the ability to fail safely matters as much as the ability to operate correctly.
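
The detect-then-zeroize behavior can be modeled as a small state change. The class below is a hypothetical Python model: Python cannot guarantee that no other copy of a secret lingers in memory, so real zeroization is a job for hardware and low-level firmware. What the sketch does show is the policy: the key is only used internally (here for an HMAC), and a tamper event overwrites it in place and then blocks all further use.

```python
import hashlib
import hmac


class TamperResponsiveKeyStore:
    """Hypothetical model of a key store that zeroizes on a tamper event."""

    def __init__(self, key: bytes) -> None:
        self._key = bytearray(key)  # mutable buffer so it can be overwritten
        self._zeroized = False

    @property
    def zeroized(self) -> bool:
        return self._zeroized

    def on_tamper(self) -> None:
        """Called by a (hypothetical) tamper sensor: wipe, then lock."""
        for i in range(len(self._key)):
            self._key[i] = 0
        self._zeroized = True

    def authenticate(self, message: bytes) -> bytes:
        """Use the key without ever handing it out."""
        if self._zeroized:
            raise PermissionError("key material was zeroized after tamper")
        return hmac.new(bytes(self._key), message, hashlib.sha256).digest()


if __name__ == "__main__":
    store = TamperResponsiveKeyStore(b"\x13\x37" * 16)
    store.authenticate(b"status")
    store.on_tamper()
    print(store.zeroized)  # True
```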

Opaque Coatings, Shields, and Packaging

Protective coatings, encapsulation, potting materials, and tamper-detection envelopes make direct observation and stimulation harder. They do not make attack impossible, but they increase cost, complexity, and risk for the adversary. In security, making the attacker miserable is often a valid business objective.

Environmental Monitoring

Designers may monitor voltage, temperature, clock behavior, and related environmental conditions. If the chip detects abnormal conditions, it can halt, reset, deny access, or erase secrets. That helps defend not only against laser-assisted attacks, but also against clock glitches, voltage glitches, and other fault-inducing methods.
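
A monitor of this kind boils down to comparing sensor readings against an allowed operating window and choosing a safe response when any reading falls outside it. In the Python sketch below, the `Limits` values are made-up placeholders (real limits come from the part's specifications), and reading the sensors is assumed to happen elsewhere.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Limits:
    # Placeholder operating window, not taken from any real datasheet.
    volts_min: float = 1.62
    volts_max: float = 1.98
    temp_min_c: float = -40.0
    temp_max_c: float = 105.0
    clock_min_mhz: float = 45.0
    clock_max_mhz: float = 55.0


def out_of_range(volts: float, temp_c: float, clock_mhz: float,
                 limits: Limits = Limits()) -> List[str]:
    """Return the names of readings outside the window (empty = all OK)."""
    checks = {
        "voltage": (volts, limits.volts_min, limits.volts_max),
        "temperature": (temp_c, limits.temp_min_c, limits.temp_max_c),
        "clock": (clock_mhz, limits.clock_min_mhz, limits.clock_max_mhz),
    }
    return [name for name, (value, low, high) in checks.items()
            if not low <= value <= high]


def respond(violations: List[str]) -> str:
    # Fail safe: any violation halts and erases secrets rather than continuing.
    return "halt-and-zeroize" if violations else "operate"


if __name__ == "__main__":
    print(respond(out_of_range(1.80, 25.0, 50.0)))  # operate
    print(out_of_range(1.20, 25.0, 50.0))           # ['voltage']
```

Halting and zeroizing is only one of the possible responses; a design could instead reset or deny access. In hardware, such checks typically run continuously rather than as an occasional software poll.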

Redundancy and Error Detection

Security-critical computations may be performed with redundancy checks, repeated operations, or consistency tests so a single faulty result does not automatically become trusted. This is especially valuable when the attacker’s objective is to corrupt one decision at one critical moment.
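
One simple form of redundancy is to run the same computation twice and release the result only if both runs agree. The Python sketch below applies that to an HMAC tag; the key, the message, and the `make_flaky_mac` fault simulation are hypothetical. Note the limitation: a fault that corrupts both runs in exactly the same way would slip through, which is why this is usually combined with other checks.

```python
import hashlib
import hmac
from typing import Callable, Optional

KEY = b"hypothetical-device-key"
MESSAGE = b"hypothetical-command"


def redundant(compute: Callable[[], bytes]) -> Optional[bytes]:
    """Temporal redundancy: compute twice, release only on agreement."""
    first = compute()
    second = compute()
    if not hmac.compare_digest(first, second):
        return None  # disagreement is treated as a fault; release nothing
    return first


def good_mac() -> bytes:
    return hmac.new(KEY, MESSAGE, hashlib.sha256).digest()


def make_flaky_mac() -> Callable[[], bytes]:
    """Return a MAC function whose first call is corrupted (simulated fault)."""
    calls = 0

    def flaky_mac() -> bytes:
        nonlocal calls
        calls += 1
        tag = bytearray(good_mac())
        if calls == 1:
            tag[0] ^= 0x01  # transient single-bit error on the first run only
        return bytes(tag)

    return flaky_mac


if __name__ == "__main__":
    print(redundant(good_mac) == good_mac())  # True
    print(redundant(make_flaky_mac()))        # None
```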

Secure Architecture Choices

Countermeasures are not just packaging tricks. They also include architectural decisions: isolating sensitive functions, reducing debug exposure, protecting control-flow logic, hardening boot paths, validating state transitions, and limiting what happens if one block fails unexpectedly.
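
Validating state transitions can be as simple as an allow-list of legal moves, with anything else treated as a fault. The boot states and transitions below are hypothetical. The point of the Python sketch is that a skipped step (for example, jumping straight from reset to running the application) drives the machine into a locked state instead of being silently accepted.

```python
from enum import Enum, auto


class BootState(Enum):
    RESET = auto()
    VERIFY = auto()
    RUN_APP = auto()
    LOCKDOWN = auto()


# Hypothetical allow-list: each state maps to the states it may move to.
ALLOWED = {
    BootState.RESET: {BootState.VERIFY},
    BootState.VERIFY: {BootState.RUN_APP, BootState.LOCKDOWN},
    BootState.RUN_APP: {BootState.RESET},
    BootState.LOCKDOWN: set(),  # terminal: no way out
}


class BootStateMachine:
    def __init__(self) -> None:
        self.state = BootState.RESET

    def transition(self, target: BootState) -> BootState:
        if target in ALLOWED[self.state]:
            self.state = target
        else:
            # Illegal jump: treat as a fault and fail into a safe state.
            self.state = BootState.LOCKDOWN
        return self.state


if __name__ == "__main__":
    skipped = BootStateMachine()
    print(skipped.transition(BootState.RUN_APP))  # BootState.LOCKDOWN

    normal = BootStateMachine()
    normal.transition(BootState.VERIFY)
    print(normal.transition(BootState.RUN_APP))   # BootState.RUN_APP
```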

Why This Topic Matters Beyond Semiconductor Labs

You do not need to manufacture chips to care about this subject. If your business depends on trusted hardware, device identity, embedded systems, secure enclaves, or hardware-backed keys, physical attack resilience affects you too.

For security leaders, the bigger takeaway is strategic: implementation risk is real. The strongest algorithm in the world cannot save a weakly protected hardware implementation. If you buy secure devices, evaluate secure platforms, or rely on hardware security modules, ask better questions. What level of physical protection exists? What tamper response is documented? How is secure boot protected? What assumptions are being made about attacker access?

That kind of thinking separates mature security programs from teams that believe “hardware-backed” is a mystical spell that banishes all evil.

The Future of Hardware Security: More Layers, Less Wishful Thinking

The future of cryptoprocessor security is not about finding one perfect shield. It is about combining physical protection, operational monitoring, secure design practices, validation standards, and realistic threat modeling.

That includes support for new cryptographic algorithms, especially as post-quantum cryptography moves into practical deployment. A next-generation algorithm implemented insecurely in hardware is still an old problem wearing trendy shoes.

As chips become more central to trust decisions, the line between hardware engineering and cybersecurity keeps fading. Security teams increasingly need to understand both. The era of pretending the silicon is “someone else’s problem” is ending fast.

Final Thoughts

“Defeating a cryptoprocessor with laser beams” sounds theatrical, but the real lesson is grounded and useful. Security failures do not always come from broken algorithms or sloppy passwords. Sometimes they come from physical reality colliding with design assumptions.

Laser fault injection reminds us that a secure system is more than code and math. It is packaging, timing, monitoring, tamper response, architecture, and validation working together under pressure. That is why hardware security remains such a serious field: the secrets are tiny, the stakes are high, and the attackers do not always knock politely.

For engineers, this topic is a reminder to build layered defenses. For businesses, it is a reminder to evaluate hardware claims carefully. And for the rest of us, it is proof that somewhere in a lab, someone really did look at a chip and think, “What if I pointed a laser at that?”

Experiences and Observations Related to “Defeating A Cryptoprocessor With Laser Beams”

One of the most striking things about reading hardware security research is how often the “attack” is really an argument with assumptions. A design team assumes a check will always complete. A certification process assumes environmental boundaries are meaningful. A product manager assumes secure hardware is a box that can be ticked once and forgotten. Then a physical attack researcher arrives and politely demonstrates that assumptions, much like office plants, require ongoing care.

In many discussions around cryptoprocessor resilience, the most valuable experience is not the dramatic demo. It is the uncomfortable meeting after the demo. That is where engineering, security, compliance, and product teams all realize they were talking about the same device in very different ways. The hardware team saw timing paths and packaging constraints. The security team saw attack surfaces. The compliance team saw validation levels. The product team saw launch dates. The laser, rather unhelpfully, saw opportunity.

Another common lesson is that physical security is rarely solved by one feature. A shield without monitoring can be bypassed. Monitoring without response can be ignored. Response without sound architecture can become chaos with branding. The most resilient systems tend to come from organizations that treat hardware security as a layered discipline instead of a heroic accessory.

There is also a practical business lesson here. The organizations that take implementation security seriously often do so because they have already learned that “uses strong encryption” is not the same as “is hard to compromise.” That shift in mindset changes procurement, testing, red-team exercises, and product design. Suddenly, teams ask better questions: what happens under fault conditions, what secrets are exposed if a check fails, what gets erased, and what trust assumptions remain?

Perhaps the most relatable experience is the realization that advanced attacks often expose very ordinary weaknesses. Not because the physics is ordinary, but because the root problem is familiar: a critical decision was trusted too easily, a boundary was not monitored well enough, or a system assumed the world would behave. It never does. Not in software. Not in hardware. Definitely not around lasers.