Table of Contents

- Why the 386 Needed Defensive Engineering at the Pin Level

- ESD: When Your Finger Becomes a Tiny Thunderstorm

- Latch-up: The Chip’s Accidental Self-Destruct Circuit

- Metastability: When a “1” Refuses to Commit

- The Bigger Lesson: The 386 Was Digital on the Inside, Analog at the Edges

- Experiences and Practical Lessons From Working Around These Problems

- Conclusion

The Intel 386 is usually remembered for the glamorous stuff: 32-bit computing, protected mode, serious PC performance, and the kind of chip swagger that made late-1980s computers feel like they had finally traded in sneakers for dress shoes. But if you look closely at the 386, the most fascinating story is not only about speed. It is about survival.

This processor had to survive static electricity from the outside world, prevent destructive latch-up inside its own CMOS structure, and cope with metastability when asynchronous signals arrived at just the wrong moment. In other words, the 386 was not just a CPU. It was a tiny silicon fortress with a moat, walls, and a nervous system that knew panic was not a design strategy.

That matters because the 386 sat right at a turning point in PC history. Introduced in 1985, it packed 275,000 transistors and ran at 16 MHz, which was a serious flex for its era. Yet all that ambition still depended on something almost embarrassingly fragile: tiny I/O pads around the edge of the die, where the clean logic world inside the chip met the chaotic real world outside it. And the real world, as every engineer eventually learns, is basically a prankster with a static charge.

Why the 386 Needed Defensive Engineering at the Pin Level

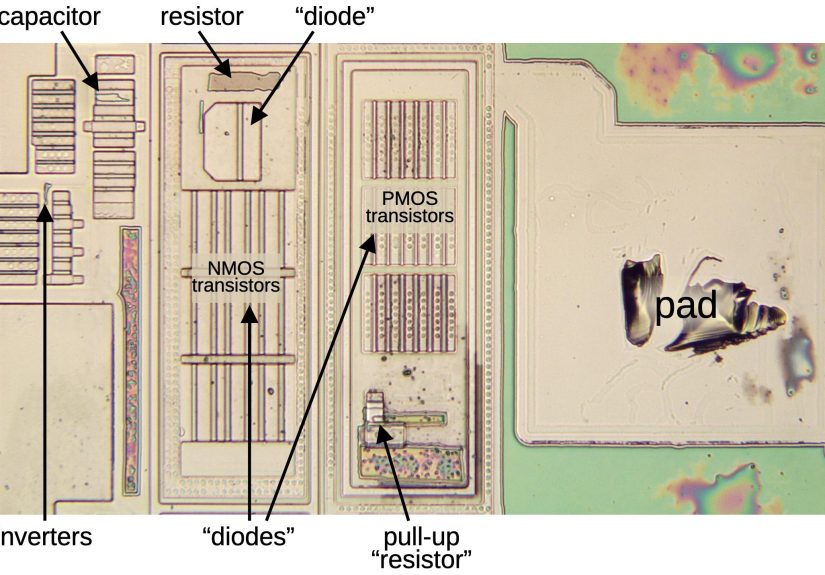

On paper, the 386 was a digital machine. In practice, its perimeter circuitry lived in a weird borderland where analog effects, parasitic structures, and timing uncertainty could ruin everyone’s day. Recent die-level reverse-engineering work has highlighted just how much area Intel devoted to these “unsexy” support circuits. Around the edge of the die were 141 bond pads, each serving as a doorway to the outside world. Every doorway needed a bouncer.

That is the key idea behind understanding how the 386 protects itself. The core logic gets the headlines, but the I/O structures do the dirty work. They clamp voltage spikes, steer current away from delicate transistors, isolate risky regions in the substrate, and synchronize unpredictable external signals before they can confuse the rest of the processor.

If that sounds like overkill, consider the alternatives. Without protection, a static discharge can punch through oxide, burn junctions, or damage metal. Without latch-up prevention, parasitic transistor structures can turn on like an accidental silicon thyristor and create a high-current short between supply rails. Without metastability mitigation, an external signal sampled at a bad instant can hover between logic states long enough to send downstream logic into contradictory interpretations. The result is not “quirky behavior.” The result is smoke, lockups, or the sort of intermittent bug that convinces grown engineers to stare into the middle distance.

ESD: When Your Finger Becomes a Tiny Thunderstorm

Electrostatic discharge, or ESD, is the first dragon at the gate. The concept is simple: charge builds up, finds a path, and discharges fast. For humans, that can mean a tiny zap from a doorknob. For integrated circuits, it can mean localized destruction. ESD damage is especially nasty because it can be immediate, partial, or latent. Sometimes a device dies on the spot. Sometimes it keeps working just well enough to betray you later.

Intel’s 386 tackled this problem with a layered protection approach at the pad level. A typical input included clamp diodes tied to the power rail and ground, plus a current-limiting resistor and an additional diode stage. The idea was straightforward and smart: if the voltage at a pad shot too high or too low, the clamp structures would redirect excess energy away from the fragile inverter deeper in the input path. The resistor reduced the current that could reach sensitive transistors. Think of it as redirecting a flood and then narrowing the pipe just in case some water still tries to rush through.
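To put rough numbers on that flood-and-pipe picture, here is a minimal sketch using the Human Body Model, the standard ESD test circuit that treats a charged person as a 100 pF capacitor discharging through 1.5 kΩ. The 2 kV precharge, the 10 V clamped pad voltage, the 0.7 V second-stage diode drop, and the 500 Ω series resistor are illustrative assumptions, not values measured from the 386.

```python
HBM_CAPACITANCE_F = 100e-12   # Human Body Model capacitor
HBM_RESISTANCE_OHM = 1500.0   # Human Body Model series resistor


def hbm_pulse(precharge_volts):
    """First-order figures for an HBM discharge into a pad held near the rails."""
    return {
        "peak_current_a": precharge_volts / HBM_RESISTANCE_OHM,
        "time_constant_s": HBM_RESISTANCE_OHM * HBM_CAPACITANCE_F,
        "stored_energy_j": 0.5 * HBM_CAPACITANCE_F * precharge_volts ** 2,
    }


def current_past_resistor(pad_volts, second_clamp_volts, series_resistance_ohm):
    """Current the series resistor passes toward the second diode stage."""
    return (pad_volts - second_clamp_volts) / series_resistance_ohm


pulse = hbm_pulse(2000.0)                       # assumed 2 kV strike
leak = current_past_resistor(10.0, 0.7, 500.0)  # assumed clamp and resistor values
print(f"peak pad current: {pulse['peak_current_a']:.2f} A")
print(f"decay time constant: {pulse['time_constant_s'] * 1e9:.0f} ns")
print(f"current past the resistor: {leak * 1e3:.1f} mA")
```

Under these assumptions the primary clamps have to carry more than an ampere, briefly, while the resistor lets only tens of milliamps continue toward the input inverter. That gap is the whole point of spending pad area on big, sturdy clamp structures.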

One of the most striking things about the 386’s ESD protection is how much silicon it consumed compared with the logic it was protecting. That was not inefficiency. That was realism. Engineers in that era understood that an I/O pad is not just a wire connection. It is an interface between civilized on-chip behavior and electrical chaos. The protection network had to be physically robust enough to absorb abuse that the logic itself could never survive.

Modern ESD literature still describes the same failure modes in different generations of silicon: oxide punch-through, junction burnout, and metallization damage. The names have not gotten any friendlier. What has changed is scale. As geometry shrinks, susceptibility often rises, making the 386’s chunky protection circuitry look less primitive and more like a wise old brick house that laughs at a storm while newer condos check the insurance policy.

Latch-up: The Chip’s Accidental Self-Destruct Circuit

If ESD is an external attack, latch-up is an inside job.

Latch-up happens because CMOS structures naturally contain parasitic PNP and NPN transistor paths. Under the wrong conditions, those parasitic elements can behave like a silicon-controlled rectifier, creating a low-impedance path between the power rails. Once triggered, the current can become self-sustaining. In plain English, the chip accidentally invents a short circuit in its own body. That is not a feature. That is the electrical equivalent of your smoke detector starting the fire.
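The self-sustaining part can be illustrated with a toy feedback model, assuming the usual first-order textbook criterion that the loop regenerates when the product of the two parasitic current gains exceeds 1. The gain values, seed current, and supply limit below are hypothetical, and the model ignores the well and substrate resistances that a real analysis would include.

```python
def loop_current_trace(seed_a, beta_npn, beta_pnp, trips, supply_limit_a):
    """Follow an injected current around the parasitic NPN/PNP loop."""
    loop_gain = beta_npn * beta_pnp
    trace = [seed_a]
    for _ in range(trips):
        # Each trip multiplies the current by the loop gain until the
        # supply limits it.
        trace.append(min(trace[-1] * loop_gain, supply_limit_a))
    return trace


# Hypothetical gains: one pair below the threshold, one pair above it.
quiet = loop_current_trace(1e-6, beta_npn=2.0, beta_pnp=0.3, trips=8, supply_limit_a=1.0)
latched = loop_current_trace(1e-6, beta_npn=20.0, beta_pnp=2.0, trips=8, supply_limit_a=1.0)
print("loop gain 0.6 ends at", quiet[-1], "A")
print("loop gain 40 ends at", latched[-1], "A")
```

With a loop gain below 1 the disturbance dies away; above 1 a microamp of injected current grows until the supply is the only thing limiting it.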

The 386 fought latch-up with guard rings around vulnerable I/O structures. These guard rings were not decorative. They acted as charge-collection and isolation structures in the substrate and wells, helping to intercept injected carriers before they could trigger parasitic conduction paths. Reverse-engineering of the 386 shows a double guard-ring arrangement around typical I/O pads, with separate protection for NMOS and PMOS regions. That is an elegant layout choice because latch-up is not just a circuit problem; it is a geometry problem. Where carriers move matters. Where current spreads matters. Where the substrate can quietly betray you absolutely matters.

This is one of the best reminders that chip design is never purely schematic-level thinking. Layout is physics in a trench coat. You can draw a beautiful logical design, but if the physical implementation lets injected carriers wander into the wrong place, the silicon will write its own opinion in amperes.

Guard rings remain a classic defense because they create low-resistance paths that collect or shunt unwanted charge. TI and Cadence still describe them in much the same spirit: use them to isolate devices, sink carriers, and reduce the likelihood that substrate noise or injected current will wake up parasitic structures. IBM’s long-running latch-up work tells the same bigger story from a technology perspective. Scaling changes device behavior, but latch-up never truly leaves the conversation; it just gets a new badge and a smaller pitch.
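One way to see why a low-resistance ring helps is a first-order trigger estimate: a parasitic transistor turns on once injected current develops roughly a diode drop across the resistance shunting its base-emitter junction. The sketch below assumes a 0.7 V turn-on voltage and two made-up shunt resistances; none of these numbers come from the 386.

```python
V_BE_ON = 0.7  # assumed base-emitter turn-on voltage, in volts


def trigger_current_a(shunt_resistance_ohm):
    """Injected current needed to forward-bias the parasitic junction."""
    return V_BE_ON / shunt_resistance_ohm


def stays_off(injected_a, shunt_resistance_ohm):
    """True if the injected current is too small to wake the parasitic device."""
    return injected_a < trigger_current_a(shunt_resistance_ohm)


INJECTED_A = 5e-3        # hypothetical 5 mA overshoot current at a pad
R_NO_RING_OHM = 1000.0   # hypothetical substrate path without a ring
R_WITH_RING_OHM = 50.0   # hypothetical path with a nearby guard ring

print("without ring:", stays_off(INJECTED_A, R_NO_RING_OHM))
print("with ring:   ", stays_off(INJECTED_A, R_WITH_RING_OHM))
```

In this simplified picture, cutting the shunt resistance by a factor of 20 raises the trigger current by the same factor, which is why a ring that offers carriers an easy path to the rail is worth its area.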

That is why the 386 deserves credit. Intel did not simply trust that normal CMOS behavior would be good enough. The chip treated latch-up as a real threat and burned real die area to prevent it. In a world where every square micron costs money, that says a lot.

Metastability: When a “1” Refuses to Commit

The third problem is less likely to physically destroy the chip, but it can absolutely wreck correct operation. Metastability occurs when a flip-flop samples a signal that changes too close to the active clock edge. Instead of settling cleanly to a logic 0 or 1, the output can linger in an in-between state for an unpredictable amount of time. This does not mean the laws of digital logic failed. It means you have smacked into the analog reality hiding underneath digital abstractions.

Designers deal with this by respecting setup and hold times and by synchronizing asynchronous inputs. But external signals do not always play nice. Interrupts, coprocessor handshakes, and other off-chip events do not necessarily arrive in perfect alignment with the CPU clock. So the 386 included special circuitry on certain inputs to reduce metastability risk before those signals entered the core logic.

The classic solution is a two-flip-flop synchronizer, and the 386 used that strategy. The first stage samples the asynchronous event. If it goes metastable, it gets extra time to resolve before the second stage samples it. Because the chance of a flip-flop still being undecided falls off exponentially with waiting time, each additional stage drastically lowers the odds that metastability will propagate far enough to matter. It does not make the probability zero. It makes the mean time between failures long enough that normal humans can get on with their lives.
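The mean-time-between-failures idea can be made concrete with the standard exponential model used in synchronizer analysis, in which MTBF equals exp(resolve time / tau) divided by the product of the metastability window, the clock rate, and the event rate. This is a sketch under stated assumptions: the 16 MHz clock comes from the article, while the time constant, window width, and event rate are hypothetical and are not measured 386 parameters.

```python
import math


def synchronizer_mtbf_s(resolve_time_s, tau_s, window_s, f_clk_hz, f_data_hz):
    """Mean time between synchronizer failures under the standard exponential model."""
    return math.exp(resolve_time_s / tau_s) / (window_s * f_clk_hz * f_data_hz)


F_CLK_HZ = 16e6          # launch clock rate quoted earlier in the article
T_CLK_S = 1.0 / F_CLK_HZ
TAU_S = 2e-9             # hypothetical regeneration time constant
WINDOW_S = 0.5e-9        # hypothetical metastability window
F_DATA_HZ = 10e3         # hypothetical rate of asynchronous events

half_cycle = synchronizer_mtbf_s(0.5 * T_CLK_S, TAU_S, WINDOW_S, F_CLK_HZ, F_DATA_HZ)
full_cycle = synchronizer_mtbf_s(1.0 * T_CLK_S, TAU_S, WINDOW_S, F_CLK_HZ, F_DATA_HZ)
print(f"half a clock to settle: {half_cycle:.3g} s")
print(f"a full clock to settle: {full_cycle:.3g} s")
print(f"improvement factor:     {full_cycle / half_cycle:.3g}")
```

The exact figures depend entirely on the assumed constants. The useful takeaway is the shape: settling time sits in the exponent, so a little extra waiting buys an enormous gain in reliability.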

What makes the 386 especially interesting is that reverse-engineering suggests Intel did not settle for an ordinary first stage on some of these inputs. Instead, the first flip-flop appears to use a sense-amplifier-based structure that can resolve ambiguous input differences more aggressively than a conventional latch. That is a wonderfully practical idea: if metastability is a hesitation problem, use a circuit that is very good at turning tiny differences into decisive outcomes quickly.
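A first-order way to see why a faster regenerative stage helps: in the usual small-signal model, a latch amplifies an initial imbalance exponentially, so the time to reach a valid logic level grows with the logarithm of how small that imbalance was and scales directly with the time constant. The time constants and voltages below are hypothetical, chosen only to compare a slower stage with a faster one; they are not measurements of the 386's circuit.

```python
import math


def resolve_time_s(initial_diff_v, logic_swing_v, tau_s):
    """Time for an imbalance growing as exp(t / tau) to reach a full logic swing."""
    return tau_s * math.log(logic_swing_v / initial_diff_v)


# Hypothetical time constants for a slower and a faster first stage.
for label, tau in (("conventional latch", 2e-9), ("sense-amplifier stage", 0.5e-9)):
    t = resolve_time_s(initial_diff_v=1e-3, logic_swing_v=2.5, tau_s=tau)
    print(f"{label}: {t * 1e9:.1f} ns")
```

A stage with a quarter of the time constant resolves the same millivolt-level ambiguity in a quarter of the time, leaving more of the clock period as safety margin.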

It is a beautiful little lesson in engineering humility. The 386 did not pretend asynchronous inputs could be made synchronous by wishful thinking. It accepted that uncertainty happens, then gave the signal a safe place to calm down before it was allowed into the main logic party.

The Bigger Lesson: The 386 Was Digital on the Inside, Analog at the Edges

This is the part that makes the 386 story so fun. Most people imagine a CPU as a monument to clean binary order. Ones. Zeros. Crisp edges. Firm decisions. But the protection circuitry shows that at the chip boundary, reality gets messy. Voltages overshoot. Carriers wander. Signals arrive late. Physics refuses to read the marketing brochure.

That is why the 386’s pad circuitry is so revealing. The processor’s real toughness did not come only from instruction sets and transistor count. It came from defensive engineering that acknowledged ugly failure modes and designed around them. ESD networks, guard rings, and synchronizers are not glamorous, but they are the reason glamorous things keep working.

In that sense, the 386 still feels modern. Today’s chips use more advanced ESD cells, more sophisticated latch-up rules, and far more rigorous clock-domain-crossing analysis, but the playbook is recognizable. Clamp the surge. Isolate the substrate. Synchronize the asynchronous. Respect analog behavior even when the product brochure is screaming “digital.”

Experiences and Practical Lessons From Working Around These Problems

Anyone who has spent time with vintage boards, lab prototypes, or sensitive digital systems quickly learns that failures related to ESD, latch-up, and metastability rarely announce themselves politely. They usually show up wearing disguises.

An ESD-related problem, for example, does not always look dramatic. Sometimes nothing visibly burns, nothing explodes, and no one gets the courtesy of a heroic pop sound. Instead, a board that worked yesterday starts failing in strange ways. A bus line becomes flaky. An input behaves just slightly wrong. A device passes a quick test and then refuses to boot after sitting overnight. That is part of what makes ESD so maddening in real life: the damage can be tiny, localized, and delayed. Engineers often talk about static precautions with almost religious seriousness because once you have chased one invisible ESD injury for two days, the anti-static wrist strap stops feeling like a suggestion and starts feeling like civilization.

Latch-up has its own personality. It often feels like a betrayal by the chip itself. A system powers up, current suddenly spikes, something gets hotter than it should, and then, after a power cycle, everything looks normal again. That intermittent nature is what makes latch-up so spooky on the bench. A novice might blame the power supply, bad firmware, or “just one weird test.” An experienced engineer starts wondering whether injected current, poor decoupling, or layout weaknesses are waking up parasitic structures. You learn very quickly that silicon has hidden pathways, and hidden pathways love public embarrassment.

Metastability is even sneakier because it often hides inside timing corner cases. The system works a thousand times, then fails once when two events align badly. Maybe a handshake crosses a clock boundary at exactly the wrong instant. Maybe a peripheral response arrives just close enough to a sampling edge to create trouble. The bug feels arbitrary, but it is not; it is statistical, with a failure rate you can estimate. That distinction matters. Once engineers internalize that metastability is a probability problem, not a fairy tale, their design habits improve. They stop trusting raw asynchronous inputs. They add synchronizers. They stretch pulses. They use FIFOs or handshakes where appropriate. They stop hoping the universe will be in a good mood.
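The "works a thousand times, then fails once" pattern is easy to reproduce with a small Monte Carlo sketch. It assumes asynchronous edges arrive at a uniformly random point in the clock cycle and counts how many land inside a vulnerable window around the sampling edge. The 62.5 ns period corresponds to 16 MHz; the window width and event count are hypothetical.

```python
import random


def count_window_hits(n_events, clock_period_s, window_s, seed=386):
    """Count asynchronous edges that land inside the sampling window."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_events):
        # Arrival time relative to the previous clock edge, uniformly random
        # because the external event is unrelated to the clock.
        phase = rng.uniform(0.0, clock_period_s)
        distance_to_edge = min(phase, clock_period_s - phase)
        if distance_to_edge < window_s / 2:
            hits += 1
    return hits


N_EVENTS = 200_000
PERIOD_S = 62.5e-9   # one cycle at 16 MHz
WINDOW_S = 0.5e-9    # hypothetical setup-plus-hold window

hits = count_window_hits(N_EVENTS, PERIOD_S, WINDOW_S)
expected = N_EVENTS * WINDOW_S / PERIOD_S
print(f"risky samples: {hits} observed, about {expected:.0f} expected")
```

Landing in the window is a risk, not a guaranteed failure, but the count tracks the ratio of window to clock period. That is what "statistical, not random" means in practice: the rate is predictable even when the individual event is not.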

The 386 is a great teaching example because it makes these lessons visible. It reminds us that robust chips are not built by assuming ideal conditions. They are built by assuming the outside world is noisy, the substrate is mischievous, and timing uncertainty is always waiting in the parking lot. That mindset is still useful whether you are debugging a retrocomputer, laying out a modern ASIC, or trying to explain to a new engineer why “it only failed once” is never as comforting as it sounds.

Conclusion

Intel’s 386 protected itself the same way good engineers still protect complex systems today: with layers, margin, and a healthy distrust of the real world. Its ESD network clamped dangerous voltage surges before they could reach fragile logic. Its guard rings helped keep latch-up from turning parasitic transistor structures into a power-rail disaster. Its synchronizer circuitry reduced the chance that asynchronous inputs would drag the processor into metastable confusion.

That is what makes the 386 more than a famous CPU from the PC boom years. It is a reminder that great chip design is not only about performance. It is about staying alive long enough to deliver that performance reliably. And honestly, that may be the most silicon thing ever: before you can be brilliant, you first have to avoid being fried, shorted, or confused.